Memory Primitives: The Infrastructure Layer That Determines Whether Your Agent Remembers or Hallucinates

The emerging memory infrastructure stack, from learnable CRUD operations to structural primitives, and the $46M+ market crystallizing around making agents stateful.

Three Takeaways

Memory is shifting from static storage to learnable infrastructure. A wave of recent research (AtomMem, Memory-R1, FluxMem, D-MEM) treats memory management as a dynamic decision-making problem, training agents via reinforcement learning to orchestrate their own CRUD operations. Early results show 5-10% improvements on long-horizon reasoning benchmarks with as few as 152 training examples.

The Forms-Functions-Dynamics framework gives us the first rigorous taxonomy for memory systems, and it exposes why most production deployments are incomplete. A 102-page survey from NUS and Renmin University proposes that every memory system must be evaluated across three axes: what form it takes (token-level, parametric, latent), what function it serves (factual, experiential, working), and how it evolves over time (formation, evolution, retrieval). Most production systems today nail one axis and ignore the other two.

The memory infrastructure market is crystallizing around four distinct wedges, each with a different moat profile. Mem0 ($24M Series A), Letta ($10M Seed), Cognee ($7.5M Seed), Zep ($2.3M Pre-Seed, YC W24), and Reload ($2.275M) are betting on different architectural primitives. The winners won't be the ones with the largest vector store. They'll be the ones who treat memory as a learnable, governable, auditable system. Total disclosed funding: $46M+.

The Memory Problem

We obsess over model scale, tool use, and reasoning chains while treating memory as a monolithic black box. But every production agent failure traces back to the same root cause: not how much the agent knows, but how it manages what it knows over time.

An agent that can't remember your last three interactions is a chatbot. An agent that remembers everything but retrieves the wrong context at the wrong time is worse: it's a confident hallucinator with a database. And an agent that can't forget is a compliance liability waiting to happen.

The agentic AI market hit $7.84 billion in 2025 and is projected to reach $52.62 billion by 2030. The gap is this: orchestration frameworks are commoditizing (LangGraph, CrewAI, AutoGen all converge on the same abstractions), while the memory layer, the infrastructure that makes agents stateful, personalized, and adaptive, remains fragmented, underspecified, and mostly hand-crafted.

That's changing. Over the last four months, a convergence of academic research and venture activity has started to formalize memory not as a feature you bolt onto an agent, but as a stack of composable primitives that must be designed, trained, and governed.

The research velocity tells the story: since October 2025, we count three comprehensive surveys, 20+ architecture papers, and at least five new memory frameworks, all focused on agent memory. Three papers dropped in the week before this newsletter went to press. This isn't a niche subfield anymore. It's an infrastructure category taking shape.

From Storage to Strategy: The Research Convergence

The Problem with Static Memory

Most production agent memory systems today follow what researchers call a "one-size-fits-all" workflow: every piece of information gets the same treatment. New input arrives, gets chunked, embedded, stored in a vector database, and retrieved via cosine similarity when it seems relevant. It's the same pipeline whether you're building a customer support bot or a quantitative trading agent.
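
To make the one-size-fits-all pipeline concrete, here is a minimal sketch. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and `StaticMemory` is a hypothetical name used only for illustration; it is not taken from any product discussed here.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: bag-of-words token counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class StaticMemory:
    """Every input gets the same treatment: embed, store, retrieve by similarity."""

    def __init__(self):
        self.items = []  # list of (text, vector) pairs

    def write(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def retrieve(self, query: str, k: int = 1) -> list:
        q = embed(query)
        ranked = sorted(self.items, key=lambda it: cosine(q, it[1]), reverse=True)
        return [text for text, _ in ranked[:k]]
```

Nothing in this pipeline looks at the task, the age of an item, or whether a new fact contradicts an old one, which is the limitation the next paragraphs describe.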

The failure modes are predictable. Continuous memory fusion obscures fine-grained details. Merge too aggressively and you lose the specific dates, names, and figures you'll need later. Rigid forgetting schedules discard early-but-critical information; in long-horizon reasoning tasks, something from turn one might be the key to answering a question at turn fifty. And static retrieval can't adapt to task context. The same query in a compliance audit versus a strategy session needs fundamentally different memory behaviors.

The Learnable Memory Thesis

Four research threads published between August 2025 and March 2026 converge on the same insight: memory management should be a learned decision-making problem, not a fixed pipeline.

AtomMem (Renmin University & Tsinghua, January 2026) decomposes memory management into four atomic CRUD operations (Create, Read, Update, Delete) and frames the entire memory workflow as a Partially Observable Markov Decision Process (POMDP). The agent learns a policy over these operations using a combination of supervised fine-tuning and reinforcement learning. AtomMem-8B consistently outperforms static-workflow memory methods across three long-context benchmarks, discovering structured, task-aligned memory strategies that no human engineer designed. (GitHub)
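
A rough sketch of what an atomic CRUD action space can look like follows. All names here (`Op`, `Action`, `MemoryStore`, `rule_policy`) are hypothetical, and the hand-written `rule_policy` only marks the slot where a learned policy would sit; it is not AtomMem's training setup or API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Op(Enum):
    CREATE = "create"
    READ = "read"
    UPDATE = "update"
    DELETE = "delete"


@dataclass
class Action:
    op: Op
    key: str
    value: Optional[str] = None


@dataclass
class MemoryStore:
    entries: dict = field(default_factory=dict)

    def step(self, action: Action) -> Optional[str]:
        """Apply one atomic operation; only READ returns a value."""
        if action.op is Op.CREATE:
            self.entries.setdefault(action.key, action.value)
        elif action.op is Op.UPDATE:
            if action.key in self.entries:
                self.entries[action.key] = action.value
        elif action.op is Op.DELETE:
            self.entries.pop(action.key, None)
        elif action.op is Op.READ:
            return self.entries.get(action.key)
        return None


def rule_policy(observation: str, store: MemoryStore) -> Action:
    """Hand-written placeholder; a trained policy would replace this function."""
    key, _, value = observation.partition("=")
    if not value:
        return Action(Op.READ, key)
    if key in store.entries:
        return Action(Op.UPDATE, key, value)
    return Action(Op.CREATE, key, value)
```

The point of the decomposition is that the policy's output space is small and discrete, which is what makes it tractable to train with supervised fine-tuning and reinforcement learning.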

Memory-R1 (LMU Munich, August 2025; revised January 2026) pushes the reinforcement learning angle further. It trains two specialized sub-agents: a Memory Manager that learns ADD, UPDATE, DELETE, and NOOP operations, and an Answer Agent that pre-selects and reasons over relevant entries. Both are fine-tuned with outcome-driven RL (PPO and GRPO). With only 152 training QA pairs, Memory-R1 outperforms strong baselines and generalizes across three benchmarks (LoCoMo, MSC, LongMemEval) and multiple model scales from 3B to 14B parameters.
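
For readers unfamiliar with GRPO, the core idea is that each sampled rollout is scored relative to the other rollouts for the same question. The sketch below shows only that normalization step. The exact-match reward and the use of the population standard deviation are assumptions made for illustration, not details confirmed by the Memory-R1 paper.

```python
from statistics import mean, pstdev


def exact_match_reward(prediction: str, gold: str) -> float:
    """Assumed outcome reward: 1.0 if the final answer matches, else 0.0."""
    return 1.0 if prediction.strip().lower() == gold.strip().lower() else 0.0


def group_relative_advantages(rewards: list) -> list:
    """Normalize each reward against the mean and spread of its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    if sigma == 0:
        # Every rollout scored the same, so no rollout is preferred.
        return [0.0 for _ in rewards]
    return [(r - mu) / sigma for r in rewards]
```

Because the signal is the downstream answer rather than a label on each memory operation, a small set of QA pairs can supervise many ADD, UPDATE, DELETE, and NOOP decisions.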

FluxMem (February 2026) goes after the structure selection problem. Instead of committing to a single memory architecture, FluxMem equips agents with multiple complementary memory structures and learns to select among them based on interaction-level features. A three-level memory hierarchy (short-term episodic, medium-term consolidated, long-term semantic) with a Beta Mixture Model-based probabilistic gate replaces brittle similarity thresholds. Average improvements: 9.18% on PERSONAMEM, 6.14% on LoCoMo.
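
To illustrate why a probabilistic gate differs from a fixed similarity threshold, here is a two-component Beta mixture in its simplest form. The component parameters and prior are invented for the example, and this is a generic mixture posterior, not FluxMem's actual gate.

```python
import math


def beta_pdf(x: float, a: float, b: float) -> float:
    """Beta density at x, valid for 0 < x < 1."""
    log_norm = math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)
    return math.exp((a - 1) * math.log(x) + (b - 1) * math.log(1 - x) - log_norm)


def match_probability(
    score: float,
    match: tuple = (8.0, 2.0),       # hypothetical Beta(a, b) for true matches
    non_match: tuple = (2.0, 8.0),   # hypothetical Beta(a, b) for non-matches
    prior_match: float = 0.5,
) -> float:
    """Posterior probability that a similarity score came from the match component."""
    p_match = prior_match * beta_pdf(score, *match)
    p_non = (1 - prior_match) * beta_pdf(score, *non_match)
    return p_match / (p_match + p_non)
```

A threshold returns a hard yes or no at a single cut point. The mixture returns a calibrated probability, so downstream logic can treat a score of 0.55 differently from 0.95 even though both clear a 0.5 cutoff.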

D-MEM (UC San Diego & CMU, March 2026) brings a neuroscience lens to the problem. Inspired by dopamine-driven reward prediction error (RPE) in the mammalian brain, D-MEM implements a Fast/Slow routing system: a lightweight Critic Router evaluates incoming stimuli for Surprise and Utility. Low-RPE inputs (routine conversation) get cached in an O(1) fast buffer. High-RPE inputs (factual contradictions, paradigm-shifting preferences) trigger the full memory evolution pipeline. This cuts token consumption by 80%, eliminates O(N²) write-latency bottlenecks, and outperforms baselines on multi-hop reasoning by learning when not to think hard about memory, not just how to manage it.
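
The Fast/Slow routing idea can be sketched in a few lines. Everything specific below is an assumption: the class name, the product `surprise * utility` as the RPE proxy, the threshold, and the keyword-based critic, which stands in for whatever lightweight model D-MEM actually uses.

```python
from collections import deque
from typing import Callable, Tuple


class FastSlowRouter:
    def __init__(
        self,
        critic: Callable[[str], Tuple[float, float]],
        threshold: float = 0.5,
        fast_capacity: int = 100,
    ):
        self.critic = critic
        self.threshold = threshold
        self.fast_buffer = deque(maxlen=fast_capacity)  # O(1) append, bounded size
        self.slow_queue = []  # inputs awaiting the full memory-evolution pipeline

    def route(self, message: str) -> str:
        surprise, utility = self.critic(message)
        rpe = surprise * utility  # assumed proxy for reward prediction error
        if rpe < self.threshold:
            self.fast_buffer.append(message)
            return "fast"
        self.slow_queue.append(message)
        return "slow"


def keyword_critic(message: str) -> Tuple[float, float]:
    """Toy critic: flags messages that signal a changed fact or preference."""
    markers = ("actually", "no longer", "changed")
    surprising = any(m in message.lower() for m in markers)
    return (0.9, 0.9) if surprising else (0.1, 0.5)
```

Usage: `FastSlowRouter(keyword_critic).route("thanks, sounds good")` takes the fast path, while a message such as "actually, I no longer eat meat" is queued for the expensive pipeline.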

The thread running through all four: memory operations aren't just infrastructure plumbing. They're learnable policies that encode domain expertise. An agent managing a compliance audit trail should learn very different CRUD policies than one optimizing a trading strategy. Static pipelines can't capture this. Trained policies can.

The Performance Engineering Imperative

The learnable-memory thesis has a pragmatic counterpart: even the smartest memory policy is useless if it can't operate within production latency budgets.

AMV-L (Georgia Tech, February 2026) treats agent memory as a managed systems resource, similar to how operating systems manage virtual memory. It assigns each memory item a continuously updated utility score and uses value-driven promotion, demotion, and eviction across lifecycle tiers. The numbers: 3.1x throughput improvement and 4.2x latency reduction vs. TTL baselines, with the fraction of requests exceeding 2 seconds dropping from 13.8% to 0.007%. Predictable performance for long-running agents requires bounding the retrieval working-set size, not just optimizing the retrieval algorithm.
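
A minimal sketch of utility-driven lifecycle management is shown below. The exponential moving average, the thresholds, and the two tier names are assumptions chosen for clarity; AMV-L's actual scoring function and tier design are not described in enough detail here to reproduce.

```python
from dataclasses import dataclass


@dataclass
class Item:
    text: str
    utility: float = 0.5


class TieredMemory:
    def __init__(self, hot_threshold=0.6, evict_threshold=0.1, decay=0.5):
        self.hot_threshold = hot_threshold
        self.evict_threshold = evict_threshold
        self.decay = decay
        self.items = {}

    def add(self, key: str, text: str) -> None:
        self.items[key] = Item(text)

    def tick(self, used_keys: set) -> None:
        """Update every utility after one request, then evict low-value items."""
        for key in list(self.items):
            item = self.items[key]
            hit = 1.0 if key in used_keys else 0.0
            item.utility = self.decay * item.utility + (1 - self.decay) * hit
            if item.utility < self.evict_threshold:
                del self.items[key]

    def tier(self, key: str) -> str:
        return "hot" if self.items[key].utility >= self.hot_threshold else "warm"

    def working_set(self) -> list:
        """Only hot items are searched, which bounds retrieval cost."""
        return [k for k, it in self.items.items() if it.utility >= self.hot_threshold]
```

The design choice worth noticing is that retrieval cost depends on the size of `working_set()`, not on the total number of items ever stored.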

Hippocampus (HP Labs, February 2026) attacks the same problem from the data-structures level. Using compact binary signatures and a Dynamic Wavelet Matrix that compresses and co-indexes semantic search with exact content reconstruction, it achieves 31x retrieval latency reduction and 14x per-query token footprint reduction while maintaining accuracy on LoCoMo and LongMemEval. This is memory infrastructure engineering, not model research.
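
The general idea behind compact binary signatures is that comparing two integers bit by bit is far cheaper than comparing two dense float vectors. The sketch below uses a simple token-hash signature and Hamming distance. This scheme is an assumption for illustration only; it is not Hippocampus's signature construction and does not include anything like its Dynamic Wavelet Matrix.

```python
import hashlib

BITS = 64  # signature width, chosen arbitrarily for the example


def signature(text: str) -> int:
    """Set one bit per token, chosen by a stable hash of the token."""
    sig = 0
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        sig |= 1 << (digest[0] % BITS)
    return sig


def hamming(a: int, b: int) -> int:
    """Number of bit positions where two signatures differ."""
    return bin(a ^ b).count("1")


def nearest(query: str, corpus: list) -> str:
    """Return the stored text whose signature is closest to the query's."""
    q = signature(query)
    return min(corpus, key=lambda text: hamming(q, signature(text)))
```

In a real system the signatures would be precomputed and indexed rather than recomputed per query as they are in `nearest`.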

The gap between "works in a benchmark paper" and "works in a production agent serving hundreds of concurrent users" is exactly what these papers address. Memory latency at p99 is the next frontier, and the companies that solve it will own the performance layer of the memory stack.

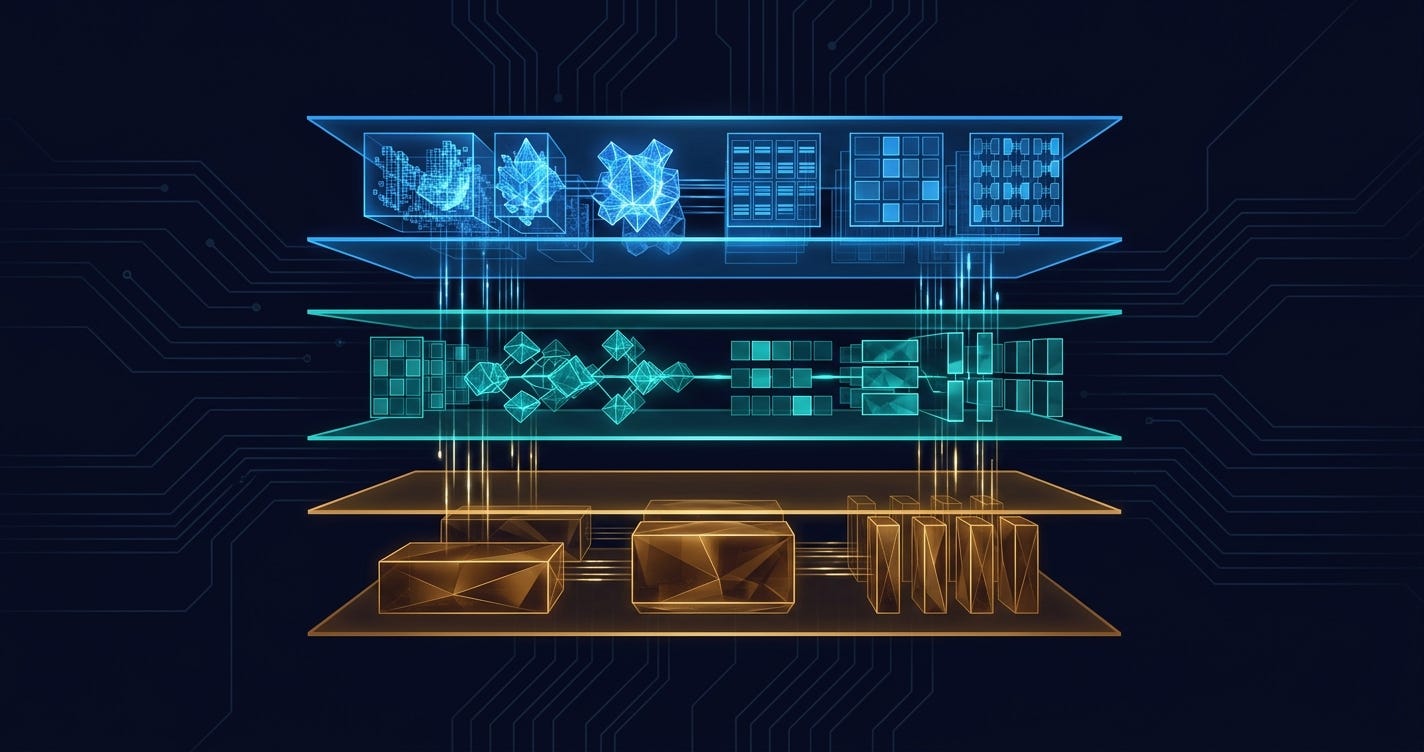

The Primitives Framework: Two Layers, One Architecture

The research converges on a two-layer model for thinking about memory infrastructure. Understanding both layers is essential for evaluating where companies and products sit in the emerging stack.

Layer 1: Atomic Operations (The Verbs)

These are the fundamental operations that any memory system must support: Create, Read, Update, and Delete (Memory-R1's variant is ADD, UPDATE, DELETE, and NOOP). The key insight from AtomMem and Memory-R1 is that these operations should be learnable, not hard-coded.

The "Forgetful but Faithful" paper (Al-Baha University, December 2025) formalizes the Delete operation with particular rigor. It introduces the Memory-Aware Retention Schema (MaRS) with six forgetting policies (FIFO, LRU, Priority Decay, Reflection-Summary, Random-Drop, and Hybrid), each with complexity analyses and optional (ε, δ)-differential privacy guarantees. Across 300 simulation runs, the Hybrid policy delivers the best composite performance (~0.911) while maintaining high privacy scores.
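
Three of the six named policies are simple enough to sketch directly. The `Record` fields, the exponential half-life form of Priority Decay, and the `enforce_budget` helper are assumptions for illustration; the paper's exact definitions and its differential privacy mechanism are not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class Record:
    key: str
    created: int     # logical timestamp of creation
    last_used: int   # logical timestamp of last access
    priority: float  # importance assigned at write time


def fifo(records: list) -> Record:
    """Forget the oldest record."""
    return min(records, key=lambda r: r.created)


def lru(records: list) -> Record:
    """Forget the least recently used record."""
    return min(records, key=lambda r: r.last_used)


def priority_decay(records: list, now: int, half_life: float = 10.0) -> Record:
    """Forget the record whose priority, decayed by idle time, is lowest."""
    def score(r: Record) -> float:
        return r.priority * 0.5 ** ((now - r.last_used) / half_life)
    return min(records, key=score)


def enforce_budget(records: list, budget: int, choose_victim) -> list:
    """Drop records one at a time until the store fits the budget."""
    kept = list(records)
    while len(kept) > budget:
        kept.remove(choose_victim(kept))
    return kept
```

The same store can produce three different victims depending on the policy, which is why the choice of policy is a product decision and not an implementation detail.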

In regulated industries, the Delete primitive isn't optional. It's a compliance requirement. GDPR, CCPA, MiFID II, and SOX all impose constraints on what an agent can remember, for how long, and with what audit trail. A learnable Delete policy that balances retention utility against privacy requirements is a product in itself.

Layer 2: Structural Primitives (The Nouns)

The comprehensive "Memory in the Age of AI Agents" survey (NUS, Renmin University, Fudan, and others; December 2025; 102 pages, 40+ authors) provides the most complete taxonomy to date. It examines memory through three unified lenses, what the authors call Forms, Functions, and Dynamics:

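Forms: How Memory Is Stored (token-level, parametric, latent)

Functions: What Memory Does (factual, experiential, working)

Dynamics: How Memory Evolves (formation, evolution, retrieval)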

The survey explicitly argues that memory should be treated as "a first-class primitive in the design of future agentic intelligence." Not an add-on. Not a feature. A foundational architectural component on par with the model itself.

Every production memory system must make explicit choices across all three axes. Most current systems are strong on one dimension and weak on the others. Mem0 is strong on token-level factual memory. Letta is strong on working memory dynamics. Cognee excels at factual formation via knowledge graphs. No single product covers the full Forms x Functions x Dynamics matrix, and that's exactly where the market opportunity lies.

The Competitive Landscape

The memory infrastructure market is organizing around four architectural approaches, each representing a different bet on which primitive matters most.

Approach 1: Memory-as-a-Service (Flat Retrieval + Managed API)

Mem0, $24M Series A (October 2025), led by Basis Set Ventures with Kindred Ventures, Peak XV Partners, Y Combinator, GitHub Fund. Strategic angels from Datadog, Supabase, PostHog, and Weights & Biases CEOs.

Mem0's wedge is simplicity: add persistent memory to any LLM application with three lines of code. 14M+ downloads, 41,000+ GitHub stars, API calls growing from 35M in Q1 to hundreds of millions by year-end 2025. The product handles storage, retrieval, and basic consolidation as a managed service.

Moat assessment: Distribution and developer adoption are real. 41K GitHub stars is a significant lead. But the core retrieval layer (embedding + similarity search) is commoditizing fast. LangMem, which launched as part of LangChain's ecosystem in early 2026, offers comparable flat-store memory with 1.3K stars and the full weight of the LangGraph ecosystem behind it. Mem0's durability depends on moving up the stack toward learnable memory policies before the orchestration frameworks absorb basic memory as a feature.

Approach 2: Agent Runtime with OS-Inspired Memory

Letta (formerly MemGPT), $10M Seed (September 2024). ~21K GitHub stars.

Letta's bet is that memory can't be separated from the agent runtime. Inspired by operating system memory management, Letta implements a three-tier hierarchy: Core (fast, small, like RAM), Recall (cached conversation history), and Archival (cold storage for long-term knowledge). The agent itself decides what to promote, demote, or evict. Memory management becomes agentic behavior.

Moat assessment: The OS metaphor is the most architecturally ambitious play in the space, and it maps cleanly onto the learnable-operations thesis from AtomMem and Memory-R1. Recent research validates the direction: AgentSys (Washington University & NTU, February 2026) applies OS-style process memory isolation to agent security, spawning worker agents in isolated contexts where external data never enters the main agent's memory. It achieves a 0.78% attack success rate on the AgentDojo benchmark while actually improving benign utility. The risk: Letta requires you to run agents inside its runtime, which creates lock-in but also limits adoption compared to plug-in approaches like Mem0 or LangMem. If the market favors composable memory primitives over integrated runtimes, Letta's approach may be too opinionated.

Approach 3: Knowledge Graph Memory

Cognee, $7.5M Seed (February 2026), led by Pebblebed (Pamela Vagata, OpenAI co-founder + Keith Adams, Facebook AI Research founder). Angels from Google DeepMind, n8n, and Snowplow.

Cognee builds a self-improving knowledge graph from diverse data sources: PDFs, Slack, Notion, images, audio. Rather than flat vector retrieval, it extracts structured entities and relationships, enabling relational and temporal reasoning over the agent's knowledge base.

Zep (YC W24), $2.3M Pre-Seed (March 2024). 4 employees.

Zep takes the graph approach in a different direction with its Graphiti engine, which models temporal entity relationships. It tracks how facts change over time: not just "what does the agent know" but "when did it learn this, and has it changed since?"
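
The "when did it learn this, and has it changed since?" question maps naturally onto facts with validity intervals. The sketch below is a generic illustration with hypothetical names (`Fact`, `TemporalFacts`, `assert_fact`, `as_of`); it is not Graphiti's data model or API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Fact:
    subject: str
    predicate: str
    obj: str
    valid_from: int
    valid_to: Optional[int] = None  # None means the fact is still current


class TemporalFacts:
    def __init__(self):
        self.facts = []

    def assert_fact(self, subject: str, predicate: str, obj: str, at: int) -> None:
        """Close any current fact for this subject and predicate, then add the new one."""
        for f in self.facts:
            if f.subject == subject and f.predicate == predicate and f.valid_to is None:
                f.valid_to = at
        self.facts.append(Fact(subject, predicate, obj, at))

    def as_of(self, subject: str, predicate: str, at: int) -> Optional[str]:
        """Return the value that was valid at the given time, if any."""
        for f in self.facts:
            same = f.subject == subject and f.predicate == predicate
            in_range = f.valid_from <= at and (f.valid_to is None or at < f.valid_to)
            if same and in_range:
                return f.obj
        return None
```

Old facts are closed rather than overwritten, so the store can answer both "what is true now" and "what did the agent believe at time t".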

Moat assessment: Knowledge graphs offer the strongest path to the Factual x Token-level quadrant of the primitives matrix. Cognee's investor profile (OpenAI + FAIR founders) signals insider conviction that structured memory will matter more than vector similarity. Zep's temporal focus addresses a gap no other player explicitly targets. Academic research backs the graph thesis: a comprehensive survey on graph-based agent memory (February 2026, 18 authors) covering extraction, storage, retrieval, and evolution mechanisms shows consistent improvements over flat-vector approaches, with an open-source resource collection at Awesome-GraphMemory. The risk for both: knowledge graph construction is brittle and domain-specific. Scaling beyond curated enterprise corpora to open-ended agent interactions is an unsolved problem.

Approach 4: Multi-Agent Shared Memory

Reload/Epic, $2.275M (February 2026), led by Anthemis with Zeal Capital Partners, Plug and Play.

Reload addresses a problem the other players largely ignore: multi-agent memory coordination. Epic provides a persistent shared memory layer that gives multiple agents a common understanding of what they're building and why. Think of it as the "system of record" for agent teams, handling onboarding, permissioning, and supervising agents regardless of model or vendor.

Moat assessment: This is the earliest-stage bet in the space, but potentially the most forward-looking. As agent architectures evolve from single-agent to multi-agent systems (the trajectory that Google's A2A protocol is betting on), shared memory becomes a coordination primitive, not just a storage layer. Research is catching up: G-Memory (PolyU, June 2025) introduces a three-tier graph hierarchy for multi-agent systems (insight graphs, query graphs, and interaction graphs) and achieves up to 20.89% improvement in embodied action success rates across five benchmarks and three MAS frameworks (GitHub). AMA (January 2026) uses coordinated agents (Constructor, Retriever, Judge, Refresher) to manage memory across multiple granularities, cutting token consumption by ~80% versus full-context methods. The risk: the multi-agent paradigm may not arrive fast enough to justify a dedicated infrastructure play.

Investment Activity

Total disclosed funding: $46M+ (Mem0 $24M, Letta $10M, Cognee $7.5M, Zep $2.3M, Reload $2.275M)

Notable signal: the investor profiles are shifting from pure AI/ML funds to infrastructure-focused VCs. Basis Set Ventures (Mem0) invests in enterprise infrastructure. Pebblebed (Cognee) was founded by OpenAI and FAIR alumni. Anthemis (Reload) focuses on financial services infrastructure. The money is betting on memory as plumbing, not as AI hype.

Additional strategic context: LangChain's LangMem SDK (open source, February 2026) adds competitive pressure from the framework layer. When the dominant orchestration framework ships its own memory toolkit, standalone memory companies face the classic platform-absorption risk.

Memory Security: The Next Infrastructure Layer

Memory isn't just a performance problem. It's an attack surface. As agents accumulate persistent state, their memory becomes a target for adversarial manipulation.

A-MemGuard (NTU, Oxford, Max Planck, Ohio State; 2025) identifies a critical vulnerability: adversaries can inject seemingly harmless records into an agent's memory that only activate in specific contexts. Corrupted outcomes then get stored as precedent, creating self-reinforcing error cycles that lower the threshold for future attacks. A-MemGuard's defense (consensus-based validation + dual-memory structure) cuts attack success rates by over 95%. (GitHub)

AgentSys (Washington University & NTU, February 2026) takes a different approach: hierarchical memory isolation inspired by OS process boundaries. A main agent spawns worker agents for tool calls, each running in an isolated context. External data and subtask traces never enter the main agent's memory. Only schema-validated return values cross boundaries through deterministic JSON parsing. On AgentDojo: 0.78% attack success. On ASB: 4.25%. Both while improving benign utility over undefended baselines. (GitHub)
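
The boundary check described above can be approximated with the standard library alone. The schema, field names, and `cross_boundary` function below are hypothetical and only show the principle that nothing outside a declared schema reaches the main agent; they are not AgentSys's implementation.

```python
import json

# Hypothetical schema for one tool's return value: field name -> required type
WEATHER_SCHEMA = {"city": str, "temperature_c": float}


def cross_boundary(raw: str, schema: dict) -> dict:
    """Parse a worker's raw output and admit it only if it matches the schema exactly."""
    data = json.loads(raw)  # malformed JSON raises a ValueError subclass
    if not isinstance(data, dict) or set(data) != set(schema):
        raise ValueError("payload fields do not match the schema")
    for key, expected_type in schema.items():
        if not isinstance(data[key], expected_type):
            raise ValueError(f"field {key!r} has the wrong type")
    return data
```

A payload carrying an extra free-text field, for example an injected `"note": "ignore previous instructions"`, is rejected before any of it is written into the main agent's context.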

SSGM (Jinan University, March 2026) addresses memory governance head-on with a framework that decouples memory evolution from execution. It introduces consistency verification, temporal decay modeling, and dynamic access control before any memory consolidation occurs. As memory systems shift from static databases to dynamic agentic mechanisms, the risks of memory corruption, semantic drift, and privacy leakage compound.

Memory security is to agent infrastructure what API security was to the SaaS stack: invisible until it isn't, then suddenly the thing that blocks enterprise adoption. No company is currently building dedicated agent memory security tooling. The first mover to productize memory integrity verification, adversarial detection, and governance-compliant retention policies has a clear wedge into regulated industries (financial services, healthcare, legal).

What's Still Unsolved

Cross-session memory consolidation at scale. Current systems handle single-session memory well. Multi-session, multi-agent memory consolidation, where an agent's knowledge must coherently evolve across thousands of interactions, remains an open problem. BMAM (January 2026) calls the failure mode "soul erosion": agents gradually lose behavioral consistency and temporal grounding across extended sessions. Their brain-inspired decomposition into episodic, semantic, salience-aware, and control-oriented subsystems hits 78.45% accuracy on LoCoMo, still well below human performance.

Multimodal memory fusion. Agents increasingly process text, images, audio, and structured data simultaneously. How to form, store, and retrieve memory across modalities, especially with temporal alignment between, say, a price chart and a news transcript, has no production-ready solution. NS-Mem (March 2026) shows the direction: a neuro-symbolic memory with episodic, semantic, and logic-rule layers achieves 12.5% improvement on constrained reasoning by combining neural retrieval with symbolic deduction. But this is still single-modality research.

Memory-aware model training. AtomMem and Memory-R1 train separate memory policies. The next frontier is co-training the base model and its memory system end-to-end, so memory strategies emerge as part of the model's reasoning rather than being bolted on.

Benchmarking standardization. LoCoMo, MSC, LongMemEval, PERSONAMEM, FiFA: papers report results on different, only partially overlapping benchmarks. There's no equivalent of MMLU or HumanEval for memory systems, making cross-method comparison unreliable.

The forgetting problem in regulated contexts. GDPR's "right to be forgotten" and financial compliance requirements (MiFID II transaction record retention) create contradictory demands: remember everything for audit, forget everything on request. No current framework resolves this tension at the architectural level.

Opportunities & White Space

1. Memory Policy Engine for Regulated Industries

Build a configurable memory governance layer that sits between the agent runtime and its storage backend. Offer pre-built memory policies for specific regulatory frameworks (SOX, HIPAA, MiFID II, GDPR) with auditable CRUD logs, differential privacy guarantees, and configurable retention schedules. The wedge: compliance teams that currently block agent deployment because they can't audit or control what the agent remembers.
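
One building block for auditable CRUD logs is a hash-chained, append-only record, where each entry commits to the one before it. The sketch below is one possible shape under stated assumptions: the `AuditLog` class and its field names are hypothetical, and a production system would also need signed entries, durable storage, and access control.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self):
        self.entries = []

    def record(self, op: str, key: str, actor: str) -> None:
        """Append one memory operation, chained to the previous entry's hash."""
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"op": op, "key": key, "actor": actor, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("op", "key", "actor", "prev")}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True
```

Note that the log records which key was deleted without storing the deleted value, which is one way to keep an audit trail while still honoring an erasure request.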

Investment thesis in one sentence: The company that makes agent memory auditable and compliant becomes the gatekeeper for every regulated-industry AI deployment.

2. Learnable Memory Optimization Platform

Productize the AtomMem/Memory-R1 approach: a platform that lets developers define memory operation spaces and train domain-specific memory policies via RL, optimizing for downstream task metrics (not just retrieval accuracy). Think "Weights & Biases for memory policies," with experiment tracking, policy evaluation, and A/B testing of memory strategies.

Investment thesis in one sentence: If memory management becomes a trainable optimization problem, the platform that makes training and evaluating memory policies accessible captures the workflow.

3. Cross-Modal Memory Fusion Layer

Build infrastructure for multimodal memory that handles temporal alignment, cross-modal indexing, and unified retrieval across text, images, audio, video, and structured data streams. The current approach (embed everything into the same vector space) loses modality-specific signal. A purpose-built fusion layer preserves cross-modal relationships.

Investment thesis in one sentence: As agents become multimodal, the memory layer that natively understands relationships across modalities becomes the connective tissue for next-generation agent architectures.

4. Memory-Native Agent Observability

Build monitoring and debugging tools specifically for agent memory systems: drift detection (when memory consolidation degrades knowledge quality), retrieval quality tracking, memory utilization metrics, adversarial injection alerts, and cost attribution (which memory operations consume the most tokens/compute). Datadog didn't just add APM to servers. It built a category around observability. Agent memory observability is equally underserved.

Investment thesis in one sentence: You can't manage what you can't measure, and today nobody is measuring agent memory health.

What's Commoditizing vs. What Has Moats

Commoditizing:

Basic vector-store memory (embed, store, retrieve). Every orchestration framework now ships this as a default capability. LangMem's launch accelerates that commoditization.

Simple conversation history persistence. Session replay is a solved problem.

Generic summarization-based memory consolidation. LLM-powered summarization is a commodity.

Durable moats:

Learnable memory policies (AtomMem/Memory-R1 approach). Training infrastructure for domain-specific memory strategies requires both research depth and production engineering. Hard to replicate.

Temporal knowledge graphs (Zep/Graphiti, Cognee). Structured memory with provenance tracking is architecturally distinct from vector stores and requires graph-native engineering.

Memory governance and compliance tooling. Regulatory expertise plus technical implementation creates a wedge that's defensible against both orchestration frameworks and model providers.

Multi-agent memory coordination (Reload/Epic). If multi-agent becomes the dominant paradigm, shared memory primitives become critical infrastructure.

Acquisition targets:

What to Watch

Q2 2026: Look for Mem0 to announce enterprise features like role-based access control, audit logging, and compliance certifications to defend against LangMem's encroachment from the framework layer. If they don't ship governance features by mid-year, the "three lines of code" wedge may not be enough.

H2 2026: Watch for the first production deployment of RL-trained memory policies outside of academic benchmarks. AtomMem's GitHub repo is public (github.com/RUCBM/AtomMem). The team that ships a commercial implementation of learnable memory, even if it's just for a single vertical, will validate the entire category.

2026-2027: The model providers will make their move. OpenAI's Responses API already includes basic memory hooks. Anthropic's Claude has persistent memory in consumer products. When model providers ship first-party memory infrastructure for enterprise agents, the standalone memory companies will need to have either moved up the stack to governance and compliance, or built enough integration depth that ripping them out is harder than adopting the model provider's default.

The leading indicator: Enterprise procurement teams asking "how does your agent handle data retention?" instead of "can your agent use tools?" When memory governance becomes a line item in RFPs, the infrastructure market will be real.

The agents that win won't have the biggest context window. They'll have the most adaptive memory primitives: a system that knows what to remember, what to forget, and how to prove it did both correctly.

Research cited:

Learnable Memory Operations

Huo et al., "AtomMem: Learnable Dynamic Agentic Memory with Atomic Memory Operation" (Jan 2026)

Yan et al., "Memory-R1: Enhancing LLM Agents to Manage and Utilize Memories via Reinforcement Learning" (Aug 2025/Jan 2026)

Lu et al., "FluxMem: Choosing How to Remember" (Feb 2026)

Song & Xin, "D-MEM: Dopamine-Gated Agentic Memory via Reward Prediction Error Routing" (Mar 2026)

Taxonomies & Surveys

Hu et al., "Memory in the Age of AI Agents" (Dec 2025)

Yang et al., "Graph-based Agent Memory: Taxonomy, Techniques, and Applications" (Feb 2026)

Du, "Memory for Autonomous LLM Agents: Mechanisms, Evaluation, and Emerging Frontiers" (Mar 2026)

Performance Engineering

Bamidele, "AMV-L: Lifecycle-Managed Agent Memory for Tail-Latency Control" (Feb 2026)

Li et al., "Hippocampus: An Efficient and Scalable Memory Module for Agentic AI" (Feb 2026)

Memory Security & Governance

Wei et al., "A-MemGuard: A Proactive Defense Framework for LLM-Based Agent Memory" (2025)

Wen et al., "AgentSys: Secure and Dynamic LLM Agents Through Explicit Hierarchical Memory Management" (Feb 2026)

Lam et al., "Governing Evolving Memory in LLM Agents: The SSGM Framework" (Mar 2026)

Forgetting & Privacy

Alqithami, "Forgetful but Faithful" (Dec 2025)

Cognitive & Bio-Inspired Architectures

Li et al., "BMAM: Brain-inspired Multi-Agent Memory Framework" (Jan 2026)

Jiang et al., "NS-Mem: Advancing Multimodal Agent Reasoning with Long-Term Neuro-Symbolic Memory" (Mar 2026)

Huang et al., "AMA: Adaptive Memory via Multi-Agent Collaboration" (Jan 2026)

Multi-Agent Memory

Zhang et al., "G-Memory: Tracing Hierarchical Memory for Multi-Agent Systems" (Jun 2025)

Open-source repositories:

AtomMem - Learnable CRUD memory policies

A-MemGuard - Memory poisoning defense

AgentSys - OS-inspired memory isolation

G-Memory - Multi-agent graph memory

Awesome-GraphMemory - Curated graph-memory resources

Mem0 - Memory-as-a-service (41K stars)

Letta - Agent runtime with OS-inspired memory (21K stars)

Cognee - Knowledge graph memory engine

Zep / Graphiti - Temporal knowledge graph

LangMem - LangChain memory SDK